A few weeks ago I was contacted via Twitter to see if I was interested in attending a Bay Area “tech/gamer blogger event”. I was a little suspicious, but eventually I acquiesced (thanks to John’s constant harassment). As it turns out, I got an invite to the “AMD Experience aboard the USS Hornet”. I didn’t know what to expect because I wasn’t told anything about it in advance.

So last night I went off to visit the USS Hornet, which I had last visited about a month ago (and never got around to blogging about). I got accosted by a security guard asking if I was “here for the event”, sigh. I fully expected that and I’m used to it, so it doesn’t upset me. Inside, they had turned off all the regular lighting and replaced it with what I can best describe as “mood lighting”. It was amusing, but it made decent photography somewhat difficult for the evening. Not that it stopped me: I have ISO 6400 to abuse on my camera, and abuse it I did.

It was kind of them to give us “community members” (a nice way of saying “bloggers”), and presumably everyone else, some swag on entry. The swag included an ATI Agent Ruby, a 1GB USB dog tag and the AMD Experience badge (pictured above left). We were sequestered in the lobby for a bit while the press conference (which was running late) finished up. After a sluggish start, we got down to the pilots’ briefing room (apt, if I do say so myself), where they gave us “community members” a briefing on AMD’s new toys. Shockingly enough, the dozen or so “community members” were all male.

So what did AMD tell us about?

Their new DirectX 11-compatible series of graphics cards, specifically the ATI Radeon HD 5000 Series. Yeah, it’s not out yet, and won’t be until later this month. They talked about the entire series, the new technology coming with it, and some of the cool features of DirectX 11. Let me tell you, I enjoy shiny new toys, but I’m not often impressed by new computer components. I mean, they go faster, have more memory, cost your firstborn… nothing fantastic about that.

That being said… I’m fairly impressed with this new card, which they are calling the Radeon 5870 (it could have a different name at release, but I doubt it).

The overview: the first DirectX 11 cards; support for the new ATI Eyefinity Technology; support for the new ATI Stream Technology; support for six (yes, SIX) displays and a maximum resolution of up to 7680 x 3200. Oh, and it will probably still cost your firstborn (that’s my guess; they didn’t talk about prices or any hardware specs).

They are also including oodles of new technology, among it something that I think will be very important: Single Large Surface. Basically, you can group the displays together so they show up as one large surface in Windows. Without any patches or special configuration, you could run World of Warcraft or any other stock (off-the-shelf) game across six screens at asininely high resolutions. And that is just what it can do out of the box.

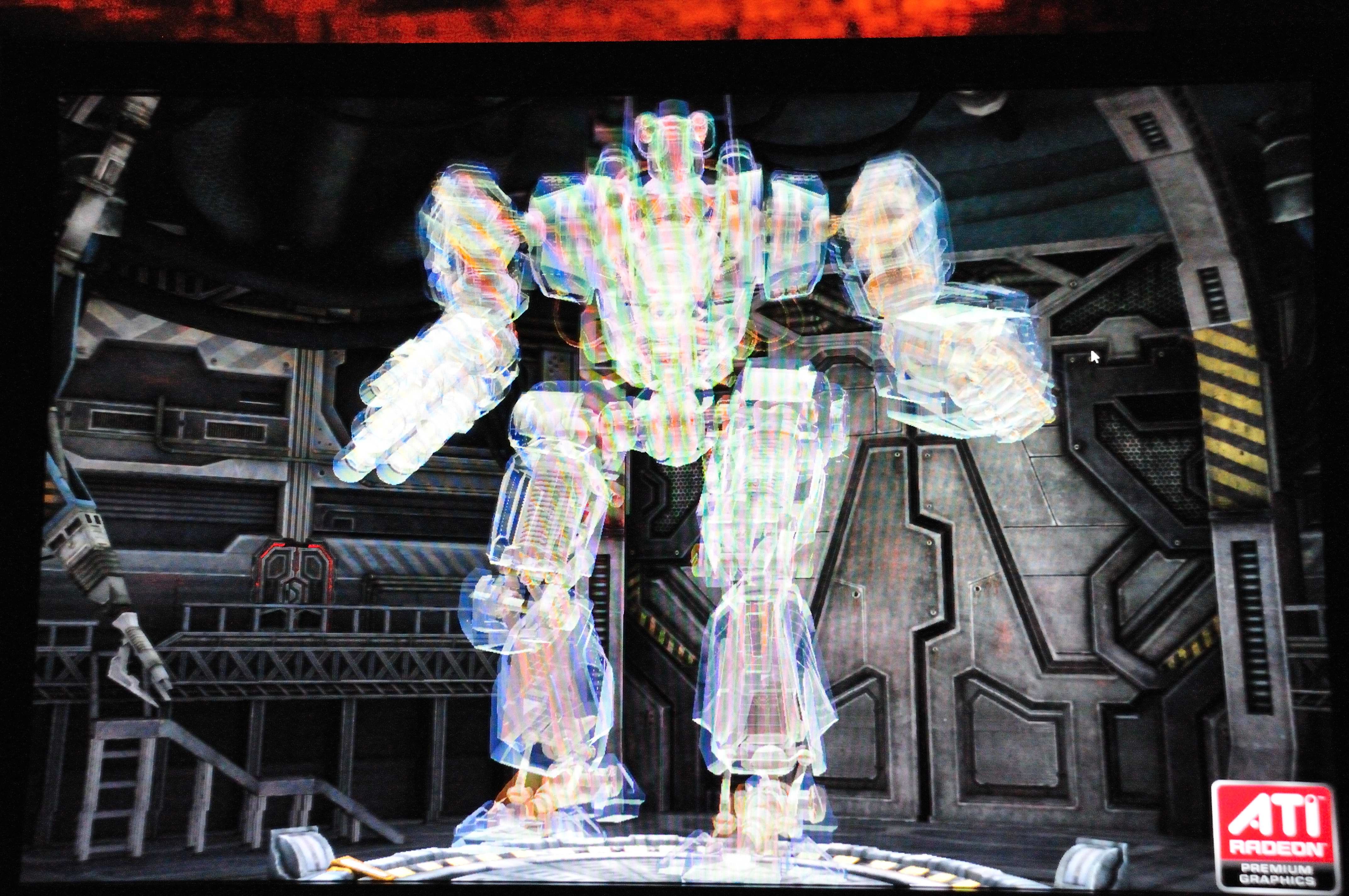

ATI also worked hard to include useful technology like support for OpenCL and DirectCompute from DirectX 11, and they demo’d some extremely impressive uses for it. First was a real-time depth of field demonstration. You know how, when you look down the scope of a gun in a video game, a small portion of the screen is “visible” and the rest is fuzzed over? That is supposed to be depth of field. Really, the games are just laying a filter or whatnot over certain parts to make them fuzzy. With this new tech, ATI showed TRUE depth of field, where things got gradually more and more out of focus (like they would on a camera at f/1.4, for example). Another demo showed 6,000 “bodies” (basically bouncy balls) on screen doing ultra-realistic physics, smoothly (all powered by the CPU/GPU combo). Last was a demo of OIT, Order Independent Transparency. They showed off a totally translucent robot; it looked like a hologram, if you will. You could see the background through it, and it was running smoothly. I tried to get a picture of it, but… a picture of a computer screen doesn’t convey it very well.

I’ve got a bunch more, but I think I’ll save it for Monday because this post is long enough, etc., etc., and slightly… late.